A Latency Budget for a Profitable Solana Copy-Trading Bot (Slot → Bundle in 400ms)

A Solana copy-trade bot is a latency stack. If you don't know where your milliseconds are going, you're not profitable — you're just hoping the slow parts aren't where the other guys are fast. This post breaks the stack apart, stage by stage, with real numbers.

Why 400ms Is The Magic Number

Solana slots are ~400ms today. If you haven't submitted your bundle within a slot of the original KOL (key opinion leader, the wallet you are copying) trade, you're going to land in block N+1 at the earliest, and on a popular Pump.fun meme launch, block N+1 is where the amateurs play. You're buying into pump momentum that the on-time bots are already exiting.

So the budget is: from the moment the KOL's trade lands in a slot to the moment your signed bundle reaches the Jito block engine, you want p50 < 400ms. On laggy days, p99 is the number you care about.

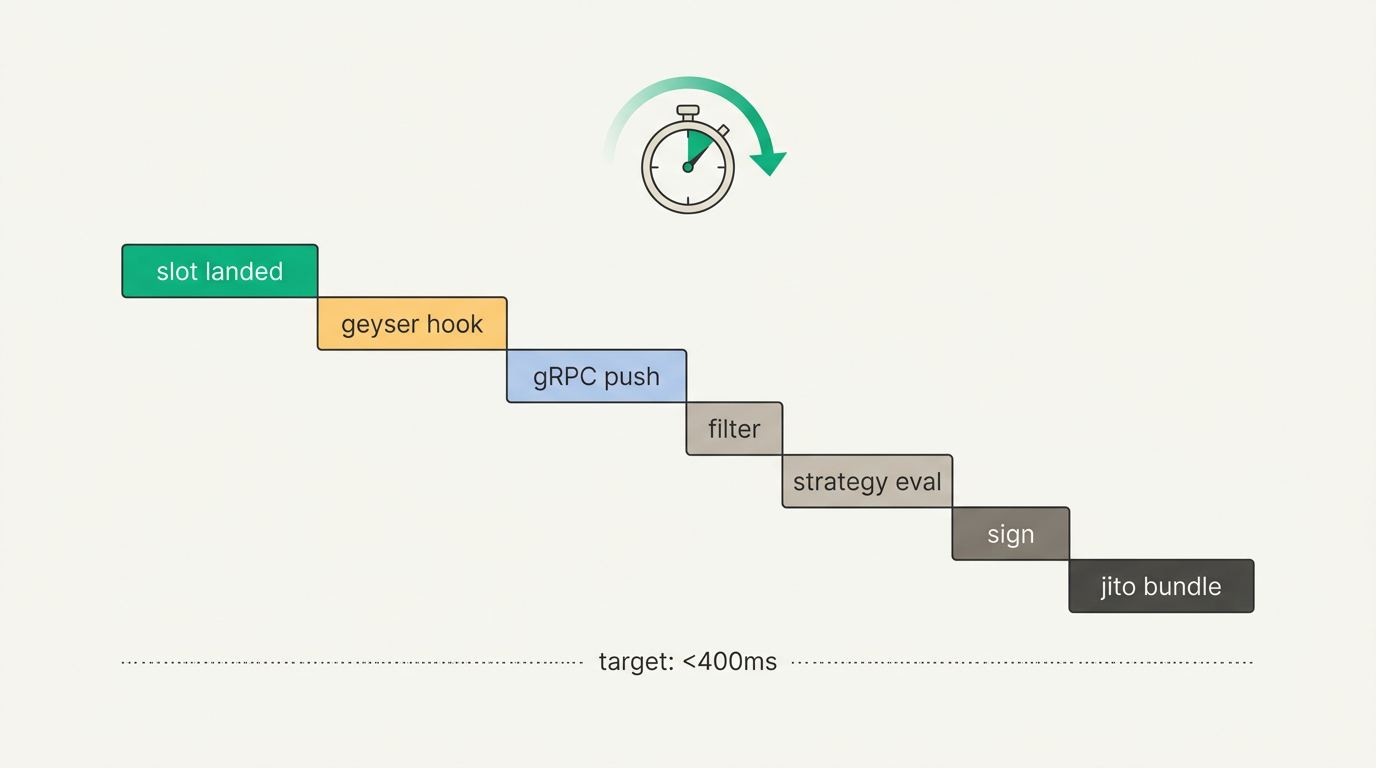

The Seven Stages

Every copy-trade bot has roughly the same seven stages, regardless of stack. Here's what they look like:

| # | Stage | What happens |
|---|---|---|
| 1 | Slot landed | Validator confirms the transaction containing the KOL's trade |
| 2 | Geyser hook | notify_transaction fires inside the validator process |
| 3 | gRPC push | Data serialized and pushed to your subscribed client |
| 4 | Filter & parse | Your matcher checks the watchlist and decodes the instruction |
| 5 | Strategy eval | Size the copy trade, pick slippage, pick priority fee |
| 6 | Sign | Build versioned tx, sign with private key |
| 7 | Jito bundle | Ship the signed bundle to the Jito block-engine |

Measured p50 / p99 Per Stage

These are real numbers from Subglow's own copy trader, measured over a week of Pump.fun traffic with an AMS-colocated gRPC subscription and a browser-side signer:

| Stage | p50 | p99 | Where it comes from |
|---|---|---|---|
| Slot → Geyser hook | 3ms | 8ms | Validator internal; not tunable from outside |
| Geyser → gRPC client | 30ms | 80ms | AMS → client network + pre-parse |
| Filter & parse | <1ms | 5ms | Rust matcher, pre-parsed JSON helps here |
| Strategy eval | 5ms | 25ms | Jupiter quote for non-Pump.fun mints dominates |
| Sign (browser) | 80ms | 180ms | Versioned tx build + ed25519 sign |
| Bundle submit | 50ms | 140ms | Browser → backend → Jito AMS |
| Total | ~170ms | ~440ms | Sum of the stages above |
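The totals in the table can be checked mechanically. The sketch below is illustrative only: the `Stage` type and function names are made up for this example, "<1ms" is entered as 1.0, and summing per-stage p99 values is used as a usually pessimistic stand-in for a true end-to-end p99, since stages rarely all hit their tails on the same event.

```rust
/// One pipeline stage with its measured latencies in milliseconds.
struct Stage {
    name: &'static str,
    p50_ms: f64,
    p99_ms: f64,
}

/// Slot time assumed throughout this post.
const SLOT_MS: f64 = 400.0;

/// Per-stage figures copied from the table ("<1ms" entered as 1.0).
fn measured_stages() -> Vec<Stage> {
    vec![
        Stage { name: "slot -> geyser hook", p50_ms: 3.0, p99_ms: 8.0 },
        Stage { name: "geyser -> grpc client", p50_ms: 30.0, p99_ms: 80.0 },
        Stage { name: "filter & parse", p50_ms: 1.0, p99_ms: 5.0 },
        Stage { name: "strategy eval", p50_ms: 5.0, p99_ms: 25.0 },
        Stage { name: "sign (browser)", p50_ms: 80.0, p99_ms: 180.0 },
        Stage { name: "bundle submit", p50_ms: 50.0, p99_ms: 140.0 },
    ]
}

/// Sums the per-stage p50 and p99 columns.
fn totals(stages: &[Stage]) -> (f64, f64) {
    let p50: f64 = stages.iter().map(|s| s.p50_ms).sum();
    let p99: f64 = stages.iter().map(|s| s.p99_ms).sum();
    (p50, p99)
}

/// The stage with the largest p50, i.e. the first place to look for savings.
fn slowest_stage(stages: &[Stage]) -> Option<&Stage> {
    stages.iter().max_by(|a, b| a.p50_ms.total_cmp(&b.p50_ms))
}

/// True if a latency figure fits inside one slot.
fn fits_in_slot(latency_ms: f64) -> bool {
    latency_ms < SLOT_MS
}
```

With these inputs the p50 column sums to 169ms and the p99 column to 438ms, which is where the rounded ~170ms and ~440ms come from. The browser signer is the largest single p50 contributor.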

Stage 1 + 2: Slot → Geyser (Not Tunable)

This is validator-internal. Your only lever is picking a gRPC provider whose validator is on healthy hardware and whose Geyser plugin isn't backpressured. If your provider's p50 slot-to-hook is >20ms, they're overloaded or their machine is slow. Test this with a dummy subscription on blockSubscribe and compare slot timestamps.
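One way to turn that comparison into numbers, sketched under assumptions: you record a local arrival time in milliseconds for each slot from a reference feed and from the provider under test, then look at the p50 and p99 of the lag. The function names are hypothetical, and nearest-rank is just one of several percentile definitions.

```rust
/// Nearest-rank percentile of latency samples in milliseconds.
/// Returns None for an empty slice or a q outside (0, 100].
fn percentile(samples: &[u64], q: f64) -> Option<u64> {
    if samples.is_empty() || !(q > 0.0 && q <= 100.0) {
        return None;
    }
    let mut sorted = samples.to_vec();
    sorted.sort_unstable();
    // Nearest-rank: smallest value with at least q% of samples at or below it.
    let rank = ((q / 100.0) * sorted.len() as f64).ceil() as usize;
    Some(sorted[rank.max(1) - 1])
}

/// Per-slot lag of the candidate feed behind the reference feed.
/// Both slices hold local arrival times (ms) for the same slots, in order.
/// A candidate that arrives first is clamped to a lag of zero.
fn lag_ms(reference: &[u64], candidate: &[u64]) -> Vec<u64> {
    reference
        .iter()
        .zip(candidate)
        .map(|(r, c)| c.saturating_sub(*r))
        .collect()
}
```

Because both timestamps are taken on your own machine, this measures relative lag between feeds and does not depend on clock synchronization with the validator.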

Stage 3: Geyser → gRPC Client (Colocate Aggressively)

This is the single biggest chunk of wall-clock time you can optimize. Two levers:

- Physical colocation. AMS → Frankfurt is ~10ms. AMS → NY4 is ~80ms. AMS → Tokyo is ~220ms. If your bot runs in Frankfurt, insist on a Frankfurt gRPC endpoint. Providers that only offer one region are hurting you.

- Pre-parsed output. Raw Yellowstone protobuf hands you Borsh-encoded instruction data. You have to decode the protobuf envelope, then Borsh-decode the Pump.fun/Raydium/Jupiter instructions before you can act. Pre-parsed providers (Subglow) do this on their side so your matcher goes directly from bytes to struct. On a busy Pump.fun slot this saves 5–15ms.
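To make the decode step concrete, here is a minimal sketch of what a matcher has to do with raw instruction bytes when the feed is not pre-parsed. The layout (an 8-byte discriminator followed by two little-endian u64 fields) and the field names are assumptions for illustration; check the real program's IDL before relying on them. Borsh does encode u64 as 8 little-endian bytes.

```rust
/// Decoded arguments of a buy-style instruction (hypothetical layout).
#[derive(Debug, PartialEq)]
struct BuyArgs {
    amount: u64,
    max_sol_cost: u64,
}

/// Reads a little-endian u64 at `offset`, or None if the slice is too short.
fn read_u64_le(data: &[u8], offset: usize) -> Option<u64> {
    let bytes = data.get(offset..offset + 8)?;
    let mut buf = [0u8; 8];
    buf.copy_from_slice(bytes);
    Some(u64::from_le_bytes(buf))
}

/// Decodes `discriminator (8 bytes) | amount (u64 LE) | max_sol_cost (u64 LE)`.
/// Returns None on a discriminator mismatch or truncated data.
fn decode_buy(data: &[u8], discriminator: &[u8; 8]) -> Option<BuyArgs> {
    if data.get(..8)? != &discriminator[..] {
        return None;
    }
    Some(BuyArgs {
        amount: read_u64_le(data, 8)?,
        max_sol_cost: read_u64_le(data, 16)?,
    })
}
```

Every transaction that touches a watched program goes through a step like this, so on a busy slot the cost is paid many times over before your matcher sees a single struct.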

Stage 4: Filter & Parse (Rust)

If you're writing in TypeScript/Node, this stage can blow up to 20–50ms during a market spike because of V8 GC pauses under high allocation pressure. Port the hot path to Rust and it's single-digit ms or better. The pre-parsed path on Subglow cuts this further because Pump.fun/Raydium/Jupiter instructions arrive as structured JSON, not raw Borsh.
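A watchlist check in Rust can be as small as a hash-set lookup over fixed-size keys, with no allocation per transaction. This is a sketch only; `Watchlist` and the raw 32-byte `Pubkey` alias are illustrative names, not a real SDK's types.

```rust
use std::collections::HashSet;

/// A Solana public key as raw bytes (illustrative alias).
type Pubkey = [u8; 32];

/// The set of wallets whose trades should be copied.
struct Watchlist {
    wallets: HashSet<Pubkey>,
}

impl Watchlist {
    /// Builds the watchlist once, outside the hot path.
    fn new(wallets: impl IntoIterator<Item = Pubkey>) -> Self {
        Watchlist { wallets: wallets.into_iter().collect() }
    }

    /// True if any signer of the transaction is on the watchlist.
    /// Borrows the keys, so the check allocates nothing.
    fn matches(&self, signers: &[Pubkey]) -> bool {
        signers.iter().any(|key| self.wallets.contains(key))
    }
}
```

Run this check before any decoding so that the vast majority of transactions are rejected after a few hash lookups.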

Stage 5: Strategy Eval

For Pump.fun mints, strategy is cheap — the bonding curve price is deterministic from the mint's virtual reserves, so you don't need to ask Jupiter. Just do the arithmetic inline.
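As a sketch of that inline arithmetic, assume a constant-product curve x * y = k over the virtual reserves and ignore fees. The function names are hypothetical, and on-chain rounding and fee handling may differ, so treat the result as an estimate and not an exact match for the program's output.

```rust
/// Tokens received for `sol_in` lamports on a constant-product curve
/// over virtual reserves: out = y * dx / (x + dx). Fees are ignored.
fn tokens_out(virtual_sol: u64, virtual_token: u64, sol_in: u64) -> Option<u64> {
    // Widen to u128 so the product of two u64 values cannot overflow.
    let new_sol = virtual_sol as u128 + sol_in as u128;
    if new_sol == 0 {
        return None; // empty curve, nothing to quote
    }
    let out = virtual_token as u128 * sol_in as u128 / new_sol;
    // out <= virtual_token because sol_in <= new_sol, so the cast is lossless.
    Some(out as u64)
}

/// Minimum acceptable output after a slippage tolerance in basis points.
fn min_out_with_slippage(expected_out: u64, slippage_bps: u16) -> u64 {
    let bps = u128::from(slippage_bps.min(10_000));
    (expected_out as u128 * (10_000 - bps) / 10_000) as u64
}
```

For example, with virtual reserves of 30 and 1000 units, buying with 10 units yields 1000 * 10 / 40 = 250 tokens, and a 1% tolerance puts the floor at 247.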

For Raydium or Jupiter mints, you need a route quote. That's a Jupiter API call with ~10–40ms overhead depending on region. Best practice: pre-fetch routes for watchlist tokens in the background, so when an intent arrives, you have a cached route ready.
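The cached-route idea can be sketched as a small TTL cache keyed by mint. Everything here is illustrative: `RouteCache` is a hypothetical type, the route payload is generic, and the caller passes `now` explicitly so expiry is easy to reason about and test. A background task would call `put` on a timer; the hot path only calls `get`.

```rust
use std::collections::HashMap;
use std::time::{Duration, Instant};

/// Route quotes keyed by mint address, valid for `ttl` after being stored.
struct RouteCache<R> {
    ttl: Duration,
    entries: HashMap<String, (Instant, R)>,
}

impl<R: Clone> RouteCache<R> {
    fn new(ttl: Duration) -> Self {
        RouteCache { ttl, entries: HashMap::new() }
    }

    /// Stores or refreshes the route for a mint (called by the prefetcher).
    fn put(&mut self, mint: &str, route: R, now: Instant) {
        self.entries.insert(mint.to_string(), (now, route));
    }

    /// Returns the cached route if it is still within the TTL.
    fn get(&self, mint: &str, now: Instant) -> Option<R> {
        let (stored_at, route) = self.entries.get(mint)?;
        if now.saturating_duration_since(*stored_at) <= self.ttl {
            Some(route.clone())
        } else {
            None // stale: caller falls back to a live quote
        }
    }
}
```

The TTL is a trade-off: too long and you trade on a stale price, too short and you are back to paying the quote round-trip on most intents.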

Stage 6: Signing

If you have a server-side signer, this is 1ms. If you have a browser signer (non-custodial, like Subglow), it's 80–180ms because you're doing a WebSocket round-trip to the browser and then back. This is the architectural cost of keeping the user's keys out of your database.

On the browser side itself, the sign operation is fast — nacl.sign is under 1ms. What eats the time is versioned transaction build (ALT lookups, compute unit limit simulation, priority fee calc). Do as much of this as possible on the backend and ship the browser a mostly-built transaction template.
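Two of those backend-side calculations are plain arithmetic. The sketch below assumes the usual Solana model where the priority fee is the requested compute unit limit times a price in micro-lamports per unit, rounded up to whole lamports, and where a transaction may request at most 1,400,000 compute units. The function names and the percentage-margin approach are illustrative choices.

```rust
/// Maximum compute units a single transaction may request.
const MAX_CU_PER_TX: u32 = 1_400_000;

/// Compute unit limit derived from a simulation result plus a safety margin,
/// capped at the per-transaction maximum.
fn cu_limit_with_margin(simulated_units: u32, margin_pct: u32) -> u32 {
    let padded = simulated_units as u64 * (100 + margin_pct as u64) / 100;
    padded.min(MAX_CU_PER_TX as u64) as u32
}

/// Priority fee in lamports for a given limit and price:
/// ceil(cu_limit * micro_lamports_per_cu / 1_000_000), saturating at u64::MAX.
fn priority_fee_lamports(cu_limit: u32, micro_lamports_per_cu: u64) -> u64 {
    let micro = cu_limit as u128 * micro_lamports_per_cu as u128;
    let lamports = (micro + 999_999) / 1_000_000;
    lamports.min(u64::MAX as u128) as u64
}
```

Note that the fee is charged on the requested limit, not on units actually consumed, so an oversized limit costs real lamports at a fixed price per unit.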

Stage 7: Bundle Submission

Submit as a Jito bundle, not a sendTransaction. Bundles land via the Jito block-engine, which has its own fast path. A regular sendTransaction is forwarded by the RPC node to the current leader, where it can be delayed or dropped under network congestion.

Your Jito endpoint region matters too: AMS is where most block-engine action is. If your bot submits from US-East but the block-engine is AMS, that's 80ms of transatlantic RTT you didn't need to pay.
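For orientation, here is a sketch of building the request body for a bundle submission. It assumes the block engine's JSON-RPC `sendBundle` method takes an array of up to five base64-encoded signed transactions plus an encoding option; verify the method name, limits, and parameters against Jito's current documentation before use. The HTTP call itself is left out, and the function name is hypothetical.

```rust
/// Maximum transactions per bundle (assumed from Jito's documented limit).
const MAX_BUNDLE_TXS: usize = 5;

/// True if `s` is non-empty and uses only the standard base64 alphabet,
/// which also means it needs no JSON string escaping.
fn is_base64(s: &str) -> bool {
    !s.is_empty()
        && s.bytes()
            .all(|b| b.is_ascii_alphanumeric() || matches!(b, b'+' | b'/' | b'='))
}

/// Builds the JSON-RPC body for a `sendBundle` call.
fn send_bundle_body(id: u64, encoded_txs: &[&str]) -> Result<String, String> {
    if encoded_txs.is_empty() || encoded_txs.len() > MAX_BUNDLE_TXS {
        return Err(format!("bundle must hold 1 to {} transactions", MAX_BUNDLE_TXS));
    }
    if let Some(bad) = encoded_txs.iter().find(|tx| !is_base64(tx)) {
        return Err(format!("not base64: {:?}", bad));
    }
    let quoted: Vec<String> = encoded_txs.iter().map(|tx| format!("\"{}\"", tx)).collect();
    Ok(format!(
        "{{\"jsonrpc\":\"2.0\",\"id\":{},\"method\":\"sendBundle\",\"params\":[[{}],{{\"encoding\":\"base64\"}}]}}",
        id,
        quoted.join(",")
    ))
}
```

Building the body is cheap; the latency that matters is the round-trip to the block engine, which is why the submitting host's region dominates this stage.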

What A Bad Stack Looks Like

We see this all the time in user churn conversations:

- Yellowstone provider in US-East, bot in EU: +80ms baseline

- Raw protobuf, TypeScript matcher with Borsh decoding: +30ms

- Custodial signer behind 3 proxies: +200ms on a spike

- sendTransaction to a random RPC: +150ms and frequent drops

Total: ~800ms p50, ~2s p99. Two slots of delay. You'll catch the good trades about half the time.

The Clean Stack

- Subglow gRPC in your region (AMS or Frankfurt for most of EU, NY4 for US-East)

- Pre-parsed JSON (skip the Borsh step entirely)

- Rust or highly optimized TS matcher (avoid V8 GC during bursts)

- Cached Jupiter routes for watchlist tokens

- Backend-built, browser-signed versioned tx (non-custodial) or server-signed (custodial)

- Jito bundle submission from the same region as the block-engine

That's how you get under 400ms p50. Anything else is bonus performance — at 170ms p50 you're ahead of the pack; at 80ms p50 you're using the same stack Subglow runs internally.
